From Input to Inference: How AI Is Shifting My Design Instincts

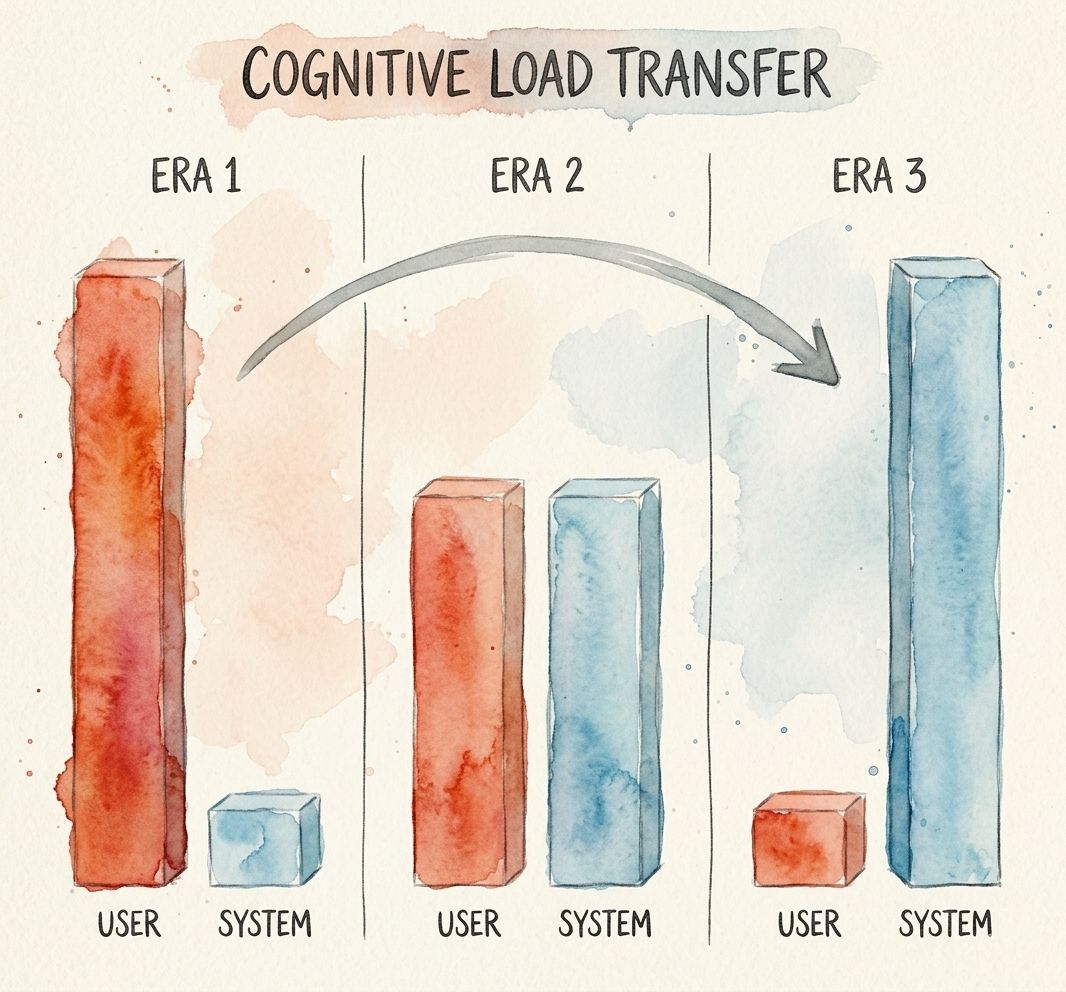

AI isn't changing the principle of good design. It's changing how much of the work the product can take on for the user.

Lately, I've noticed a shift in how I think about designing products.

For a long time, one of the central questions in product design was: what do we need the user to tell us in order to give them something useful? What fields do they need to fill out? What settings do they need to configure? What choices do they need to make before the product can really work?

AI has started to change that frame for me.

More and more, I find myself asking a different question: what can the system figure out on its own so the user doesn't have to? Not because fewer inputs are always better, and not because every product should become a black box, but because AI makes it possible for the product to carry more of the cognitive load than it used to. It doesn't remove the need for interface design. If anything, it changes where the interface should help.

That feels like a meaningful shift: away from designing around input, and more toward designing around inference.

Let's take a walk back in time.

Era One: The User Does Everything

Think about what a simple app looked like in the early-to-mid 2000s. Say you wanted to build something that helped people track their houseplants. You'd build a form. Plant name. Watering frequency. Light level. Soil type. Notes. The user fills it in, the app stores it. That's the transaction.

The same was true in enterprise. Reporting and analytics tools often required users to manually choose metrics, define filters, configure views, or write queries. Getting to the right answer usually meant making a series of explicit decisions.

This wasn't a failure of design ambition. It was a constraint of the technology. The system couldn't do anything until it was explicitly told what to do, so the user became the data entry layer, making every last excruciating decision.

Friction was high. To get real value, consumer users had to be highly motivated and enterprise users had to be technically savvy.

Era Two: The System Starts Helping

By the late 2000s, products started getting much better at assistance, and that shift has continued ever since. APIs became more common, integrations got easier, and software could pull in outside data, remember past behavior, and offer smarter defaults.

That plant tracking app could now let you type a species name and pull back care instructions from a botanical database. You didn't have to know the watering schedule; the app could look it up. In enterprise analytics, dropdown menus replaced blank fields, template libraries replaced blank canvases, and suggested metrics started appearing based on your role or past behavior.

Friction dropped. But the user was still driving. They still had to know what to search for. They still had to recognize whether a result or a template was close enough to what they needed, and adjust everything it got wrong. The system helped fill gaps, but the scaffolding was still mostly on the user's side.

Era Three: The System Does the Heavy Lifting

What's new is the layer on top of that assistance: inference.

You take a photo of your plant sitting on your windowsill. You mention that you travel a lot and tend to forget to water things. That's it. That's your input. The AI helps interpret the messy parts: the likely growing conditions, your habits, and the kind of care routine that fits your life. The app combines that with plant data, climate, and care guidance to produce a schedule and recommendations that feel tailored instead of generic.

In enterprise, the shift is just as significant. A user opens an analytics tool and types: "Why did conversion dip recently?" The system identifies the relevant metrics, compares recent performance to prior periods, surfaces the segments most likely driving the change, selects an appropriate visualization, constructs the underlying query, and renders a useful starting point before a single dropdown has been touched.

The user provided intent. The system did the inference. AI takes messy human input and turns it into something the product can use. That's the shift that keeps standing out to me.

Where This Is Happening First and Why

One place this shift feels especially visible is enterprise software. Reducing the time users spend on manual configuration has direct, measurable value: less time clicking through menus means more time doing actual work.

But the stakes feel high on the consumer side too. Enterprise users often have to use the tools their company buys. Consumer users don't. If your app makes them work harder than they expected, they leave. Consumer products have always competed for attention and patience, and AI raises the bar on what "effortless" can feel like.

The principle I keep coming back to is the same whether you're building a B2B analytics platform or a houseplant tracking app: if the system can reasonably take work off the user's plate, I want it to.

The Mindset Shift: Designing for Inference

In era one, you designed inputs and outputs. In era two, you designed retrieval. In era three, you're increasingly designing inference.

The question I find myself asking is no longer just "what do I need to ask the user?" It's "what can the system figure out on its own, and where do I actually need to involve the user?"

If the system can infer something reliably, infer it. If the system can look something up, look it up. The user's attention is one of the most valuable resources a product has, so I think it's worth spending carefully.
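
That priority order (infer, then look up, then ask) can be sketched in a few lines of Python. This is only an illustration under stated assumptions: `resolve_field`, the `Resolved` type, and the 0.8 confidence threshold are hypothetical names and values chosen for the example, not part of any real product or library.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Tuple


@dataclass
class Resolved:
    value: str
    source: str        # "inferred" or "lookup"
    confidence: float


def resolve_field(
    infer: Callable[[], Optional[Tuple[str, float]]],
    lookup: Callable[[], Optional[str]],
    min_confidence: float = 0.8,  # assumed cutoff for "reliably"
) -> Optional[Resolved]:
    """Try to fill a field without the user; return None if we must ask."""
    guess = infer()
    if guess is not None and guess[1] >= min_confidence:
        return Resolved(guess[0], "inferred", guess[1])

    found = lookup()
    if found is not None:
        return Resolved(found, "lookup", 1.0)

    # Nothing reliable: this is where the user's attention gets spent.
    return None


# Hypothetical plant-app fields, with stand-in inference and lookup results.
species = resolve_field(lambda: ("pothos", 0.93), lambda: None)
watering = resolve_field(lambda: None, lambda: "every 7-10 days")
light = resolve_field(lambda: ("low", 0.4), lambda: None)
```

In this sketch, `species` is inferred, `watering` comes from a lookup, and `light` comes back as `None` because the guess is too weak to trust. Only that last field would turn into a question for the user.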

This doesn't mean building minimal experiences. It means building smarter ones. The goal isn't a blank text box and a hope. It's a guided, intentional interaction that feels effortless because the system is doing work the user used to have to do themselves.

The Transparency Principle: Show Your Work

What I keep coming back to is that inference alone doesn't create a good experience. If the system surfaces an answer without showing the user what it inferred or why, it starts to feel opaque. Opacity hurts trust. It hurts usability too.

What feels important to me here is making the system's inference visible. As the system takes on more of the interpretive work, the interface has to show more of what it picked up and acted on. How that exactly plays out depends on the product and the task, but the user should be able to see what the system seems to understand and where they can step in if needed.

Trust grows when people can see the shape of the inference. Usability improves when inferred assumptions are easy to inspect and adjust. The product feels more intelligent because the system is doing meaningful work, and it feels easier to use because that work stays visible.
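
One way to picture "easy to inspect and adjust" is to treat each inferred assumption as data the interface can display and the user can overwrite. The sketch below is hypothetical throughout: `Assumption`, `InferredPlan`, and the sample metric and period values are invented for illustration and do not describe any specific tool.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Assumption:
    value: str
    reason: str               # why the system believes this, in plain language
    overridden: bool = False  # True once the user has corrected it


@dataclass
class InferredPlan:
    assumptions: Dict[str, Assumption] = field(default_factory=dict)

    def explain(self) -> List[str]:
        """One readable line per assumption, suitable for showing in the UI."""
        return [
            f"{name}: {a.value} ({a.reason})"
            for name, a in self.assumptions.items()
        ]

    def override(self, name: str, value: str) -> None:
        """Let the user step in and replace an inferred value."""
        self.assumptions[name] = Assumption(value, "set by you", overridden=True)


# Hypothetical reading of "Why did conversion dip recently?"
plan = InferredPlan({
    "metric": Assumption("checkout conversion rate", "matched 'conversion'"),
    "period": Assumption("last 14 days", "interpreted 'recently'"),
})

# The user disagrees with how "recently" was interpreted and adjusts it.
plan.override("period", "last 30 days")

for line in plan.explain():
    print(line)
```

The design choice worth noticing is that the reason travels with the value. The interface never has to reconstruct why the system assumed something, and a user correction is recorded the same way as an inference.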

TL;DR

What feels different to me now is where I start as a designer.

I used to think more in terms of what I needed to ask the user for. Now I think more in terms of what the product can responsibly take on for them. How much of the setup, interpretation, recommendation, or next step can the system handle before it turns back to the user?

The design principle hasn't changed. The execution has. The bar for how much work a product can take off the user is simply higher now.

I think of it as a shift from collecting inputs to helping users express intent and understand inference.

That's the design mindset AI keeps pulling me toward.